Documentation ¶

Index ¶

- Variables

- func ToolAddToPrompts(t ToolApi, prompts ...PromptApi)

- type A

- type Agent

- func (a *Agent) BaseDBReadyEnd(e *am.Event)

- func (a *Agent) BaseDBSavingEnter(e *am.Event) bool

- func (a *Agent) BaseDBSavingState(e *am.Event)

- func (a *Agent) BaseDBStartingState(e *am.Event)

- func (a *Agent) BaseQueries() *db.Queries

- func (a *Agent) BuildOffer() string

- func (a *Agent) CheckingOfferRefsEnter(e *am.Event) bool

- func (a *Agent) CheckingOfferRefsState(e *am.Event)

- func (a *Agent) Init(agent AgentAPI) error

- func (a *Agent) InterruptedState(e *am.Event)

- func (a *Agent) Log(txt string, args ...any)

- func (a *Agent) Logger() *slog.Logger

- func (a *Agent) Mach() *am.Machine

- func (a *Agent) MsgEnter(e *am.Event) bool

- func (a *Agent) OpenAI() *instructor.InstructorOpenAI

- func (a *Agent) Output(txt string, from shared.From) am.Result

- func (a *Agent) PromptEnd(e *am.Event)

- func (a *Agent) PromptEnter(e *am.Event) bool

- func (a *Agent) PromptState(e *am.Event)

- func (a *Agent) RequestingExit(e *am.Event) bool

- func (a *Agent) RequestingLLMEnd(e *am.Event)

- func (a *Agent) RequestingLLMEnter(e *am.Event) bool

- func (a *Agent) RequestingLLMExit(e *am.Event) bool

- func (a *Agent) RequestingToolEnd(e *am.Event)

- func (a *Agent) RequestingToolEnter(e *am.Event) bool

- func (a *Agent) RequestingToolExit(e *am.Event) bool

- func (a *Agent) ResumeState(e *am.Event)

- func (a *Agent) SetMach(m *am.Machine)

- func (a *Agent) SetOpenAI(c *instructor.InstructorOpenAI)

- func (a *Agent) Start() am.Result

- func (a *Agent) StartEnter(e *am.Event) bool

- func (a *Agent) StartState(e *am.Event)

- func (a *Agent) Stop(disposeCtx context.Context) am.Result

- type AgentAPI

- type Document

- type Prompt

- func (p *Prompt[P, R]) AddDoc(doc *Document)

- func (p *Prompt[P, R]) AddTool(tool ToolApi)

- func (p *Prompt[P, R]) AppendHistOpenAI(msg *openai.ChatCompletionMessage)

- func (p *Prompt[P, R]) Generate() string

- func (p *Prompt[P, R]) HistCleanOpenAI()

- func (p *Prompt[P, R]) HistOpenAI() []openai.ChatCompletionMessage

- func (p *Prompt[P, R]) MsgsOpenAI() []openai.ChatCompletionMessage

- func (p *Prompt[P, R]) Run(e *am.Event, params P, model string) (*R, error)

- type PromptApi

- type PromptSchemaless

- type S

- type Tool

- type ToolApi

Constants ¶

This section is empty.

Variables ¶

var ParseArgs = shared.ParseArgs

var Pass = shared.Pass

Functions ¶

func ToolAddToPrompts ¶

Types ¶

type Agent ¶

type Agent struct {

*am.ExceptionHandler

*ssam.DisposedHandlers

// UserInput is a prompt submitted by the user, owned by [schema.AgentStatesDef.Prompt].

UserInput string

// OfferList is a list of choices for the user.

// TODO atomic?

OfferList []string

// Messages

Msgs []*shared.Msg

// contains filtered or unexported fields

}

func (*Agent) BaseDBReadyEnd ¶ added in v0.2.0

func (*Agent) BaseDBSavingEnter ¶ added in v0.2.0

func (*Agent) BaseDBSavingState ¶ added in v0.2.0

func (*Agent) BaseDBStartingState ¶ added in v0.2.0

func (*Agent) BaseQueries ¶ added in v0.2.0

func (*Agent) BuildOffer ¶ added in v0.2.0

func (*Agent) CheckingOfferRefsEnter ¶ added in v0.2.0

func (*Agent) CheckingOfferRefsState ¶ added in v0.2.0

func (*Agent) Init ¶ added in v0.2.0

Init initializes the Agent and returns an error. It does not block.

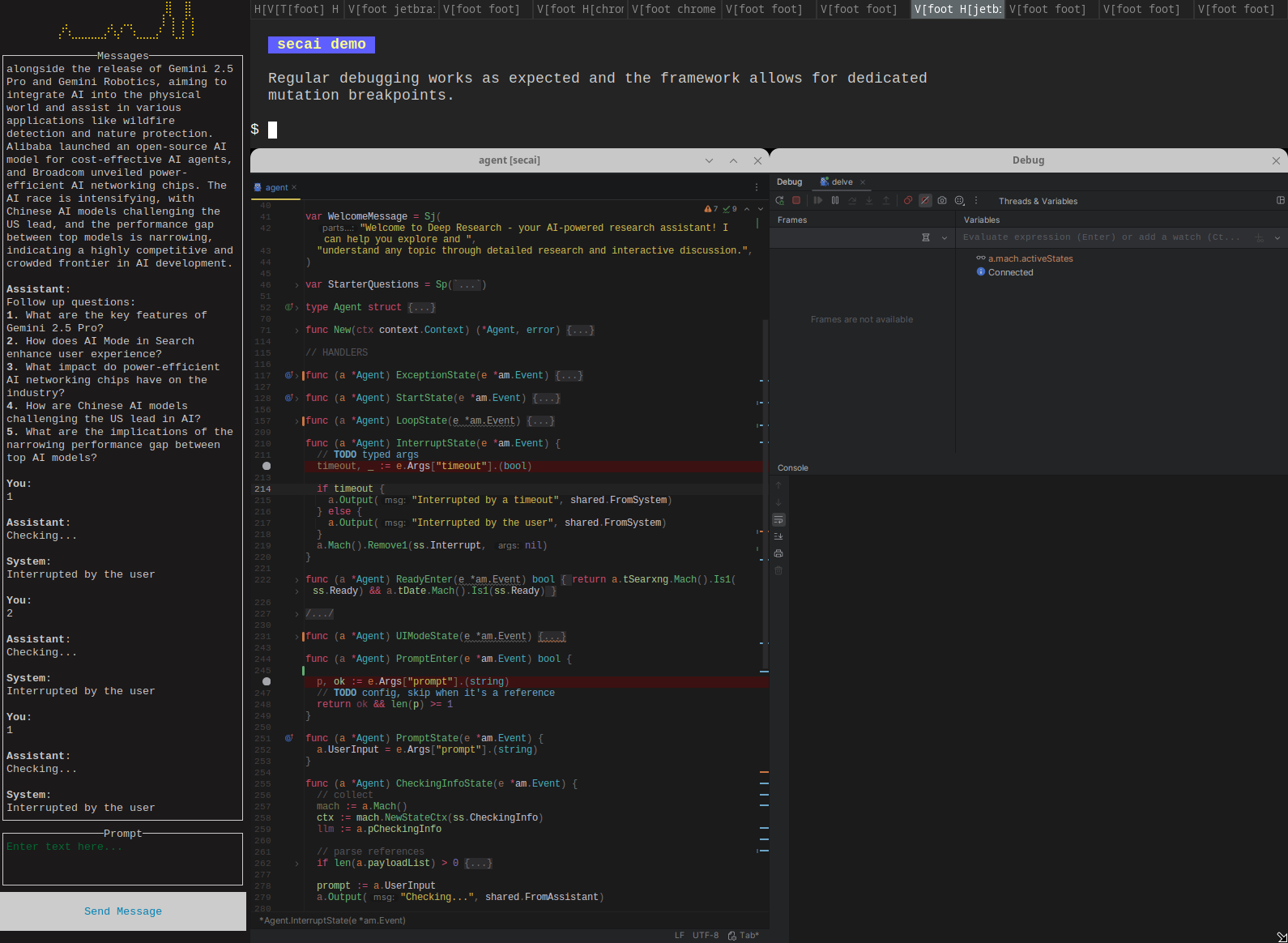

func (*Agent) InterruptedState ¶ added in v0.2.0

func (*Agent) Log ¶

Log pushes a log entry to Logger at the Info level and, optionally, to the machine log via SECAI_AM_LOG. It accepts the same argument convention as slog.Info.
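The slog.Info convention mentioned above is a message followed by alternating key/value arguments. A minimal sketch of that convention follows; `demoAgent` and `render` are hypothetical stand-ins written for this example, not secai types, and the machine-log side is not modeled.

```go
package main

import (
	"bytes"
	"log/slog"
	"os"
)

// demoAgent is a hypothetical stand-in for an agent; it is NOT secai's Agent.
type demoAgent struct {
	logger *slog.Logger
}

// Log forwards txt and alternating key/value args to the logger at Info level,
// mirroring the slog.Info argument convention.
func (a *demoAgent) Log(txt string, args ...any) {
	a.logger.Info(txt, args...)
}

// render logs one entry into a buffer and returns the formatted line.
func render(txt string, args ...any) string {
	var buf bytes.Buffer
	h := slog.NewTextHandler(&buf, &slog.HandlerOptions{
		// Drop the timestamp so the output is stable across runs.
		ReplaceAttr: func(groups []string, attr slog.Attr) slog.Attr {
			if attr.Key == slog.TimeKey && len(groups) == 0 {
				return slog.Attr{}
			}
			return attr
		},
	})
	agent := &demoAgent{logger: slog.New(h)}
	agent.Log(txt, args...)
	return buf.String()
}

func main() {
	// "choices" and "state" are keys; 3 and "Prompt" are their values.
	os.Stdout.WriteString(render("offer built", "choices", 3, "state", "Prompt"))
}
```

Keys and values must come in pairs; slog reports an unpaired trailing argument under a `!BADKEY` key rather than failing.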

func (*Agent) OpenAI ¶

func (a *Agent) OpenAI() *instructor.InstructorOpenAI

func (*Agent) Output ¶

Output is sugar for adding a schema.AgentStatesDef.Msg mutation.

func (*Agent) PromptState ¶

func (*Agent) RequestingLLMEnd ¶

func (*Agent) RequestingToolEnd ¶

func (*Agent) ResumeState ¶ added in v0.2.0

func (*Agent) SetOpenAI ¶

func (a *Agent) SetOpenAI(c *instructor.InstructorOpenAI)

func (*Agent) Start ¶

Start is sugar for adding a schema.AgentStatesDef.Start mutation.

func (*Agent) StartState ¶

type AgentAPI ¶ added in v0.2.0

type AgentAPI interface {

Output(txt string, from shared.From) am.Result

Mach() *am.Machine

SetMach(*am.Machine)

SetOpenAI(c *instructor.InstructorOpenAI)

OpenAI() *instructor.InstructorOpenAI

Start() am.Result

Stop(disposeCtx context.Context) am.Result

Log(txt string, args ...any)

Logger() *slog.Logger

BaseQueries() *db.Queries

}

AgentAPI is the top-level public API for all agents to override.
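In Go, replacing parts of a base API is usually done by embedding the base type and redefining selected methods. The sketch below illustrates that pattern with hypothetical `API`, `Base`, and `Cook` types; it is not secai code. Passing the outer value to `Init` is an assumption about why `Agent.Init` accepts an `AgentAPI`, since embedded methods otherwise cannot see the redefined ones.

```go
package main

import "fmt"

// API is a hypothetical, trimmed-down stand-in for an agent interface.
type API interface {
	Name() string
	Greeting() string
}

// Base provides default implementations, the way a base agent would.
type Base struct {
	self API // outermost implementation, set in Init
}

// Init stores the outer agent so Base methods can reach redefined methods.
func (b *Base) Init(self API) { b.self = self }

// Name is the default that embedding types may replace.
func (b *Base) Name() string { return "base" }

// Greeting dispatches through self, so a replacement Name is picked up.
func (b *Base) Greeting() string { return "hello from " + b.self.Name() }

// Cook embeds Base and replaces only Name.
type Cook struct {
	*Base
}

// Name shadows Base.Name for values of type *Cook.
func (c *Cook) Name() string { return "cook" }

// newCook wires the outer value into the embedded base.
func newCook() *Cook {
	c := &Cook{Base: &Base{}}
	c.Init(c)
	return c
}

func main() {
	fmt.Println(newCook().Greeting()) // prints "hello from cook"
}
```

Without the `self` field, `Base.Greeting` would call `Base.Name` directly and print "hello from base", because Go embedding does not provide virtual dispatch.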

type Document ¶

type Document struct {

// contains filtered or unexported fields

}

func NewDocument ¶

func (*Document) AddToPrompts ¶ added in v0.2.0

type Prompt ¶

type Prompt[P any, R any] struct {

Conditions string

Steps string

Result string

SchemaParams P

SchemaResult R

// number of previous messages to include

HistoryMsgLen int

State string

A AgentAPI

// contains filtered or unexported fields

}
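The HistoryMsgLen field is documented as the number of previous messages to include. A minimal sketch of trimming a history to its last N entries is shown below; `msg` and `lastN` are hypothetical names for this example, and treating a non-positive length as "no history" is an assumption, since the source does not say how secai handles zero.

```go
package main

import "fmt"

// msg is a hypothetical stand-in for a chat message.
type msg struct {
	Role    string
	Content string
}

// lastN returns a copy of at most n trailing messages from hist.
// Assumption: n <= 0 means "include no history".
func lastN(hist []msg, n int) []msg {
	if n <= 0 {
		return nil
	}
	if n > len(hist) {
		n = len(hist)
	}
	out := make([]msg, n)
	copy(out, hist[len(hist)-n:])
	return out
}

func main() {
	hist := []msg{
		{Role: "user", Content: "a"},
		{Role: "assistant", Content: "b"},
		{Role: "user", Content: "c"},
	}
	// Keep only the two most recent messages.
	for _, m := range lastN(hist, 2) {
		fmt.Println(m.Role, m.Content)
	}
}
```

Copying the tail keeps later appends to the trimmed slice from writing into the original history's backing array.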

func (*Prompt[P, R]) AppendHistOpenAI ¶

func (p *Prompt[P, R]) AppendHistOpenAI(msg *openai.ChatCompletionMessage)

func (*Prompt[P, R]) HistCleanOpenAI ¶ added in v0.2.0

func (p *Prompt[P, R]) HistCleanOpenAI()

func (*Prompt[P, R]) HistOpenAI ¶

func (p *Prompt[P, R]) HistOpenAI() []openai.ChatCompletionMessage

func (*Prompt[P, R]) MsgsOpenAI ¶

func (p *Prompt[P, R]) MsgsOpenAI() []openai.ChatCompletionMessage

type PromptApi ¶

type PromptApi interface {

AddTool(tool ToolApi)

AddDoc(doc *Document)

HistOpenAI() []openai.ChatCompletionMessage

AppendHistOpenAI(msg *openai.ChatCompletionMessage)

HistCleanOpenAI()

}
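Given the `AddTool` method above and the index signature `ToolAddToPrompts(t ToolApi, prompts ...PromptApi)`, the helper presumably registers one tool on several prompts. The sketch below shows that variadic pattern with hypothetical lowercase types; the `Title` method on the tool interface is invented for illustration, since ToolApi's method set is not shown here.

```go
package main

import "fmt"

// toolAPI is a hypothetical stand-in for ToolApi; Title is an invented method.
type toolAPI interface {
	Title() string
}

// promptAPI is a hypothetical stand-in exposing only AddTool.
type promptAPI interface {
	AddTool(t toolAPI)
}

// demoTool is a trivial tool carrying only a title.
type demoTool struct{ title string }

func (t demoTool) Title() string { return t.title }

// demoPrompt records the tools registered on it.
type demoPrompt struct {
	tools []toolAPI
}

func (p *demoPrompt) AddTool(t toolAPI) { p.tools = append(p.tools, t) }

// addToolToPrompts registers t on every prompt passed in.
func addToolToPrompts(t toolAPI, prompts ...promptAPI) {
	for _, p := range prompts {
		p.AddTool(t)
	}
}

func main() {
	p1, p2 := &demoPrompt{}, &demoPrompt{}
	addToolToPrompts(demoTool{title: "search"}, p1, p2)
	fmt.Println(len(p1.tools), len(p2.tools), p2.tools[0].Title())
}
```

A variadic parameter keeps the call site short when the same tool is shared by many prompts, and still accepts an existing slice via `prompts...`.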

type PromptSchemaless ¶

Directories ¶

| Path | Synopsis |

|---|---|

|

examples

|

|

|

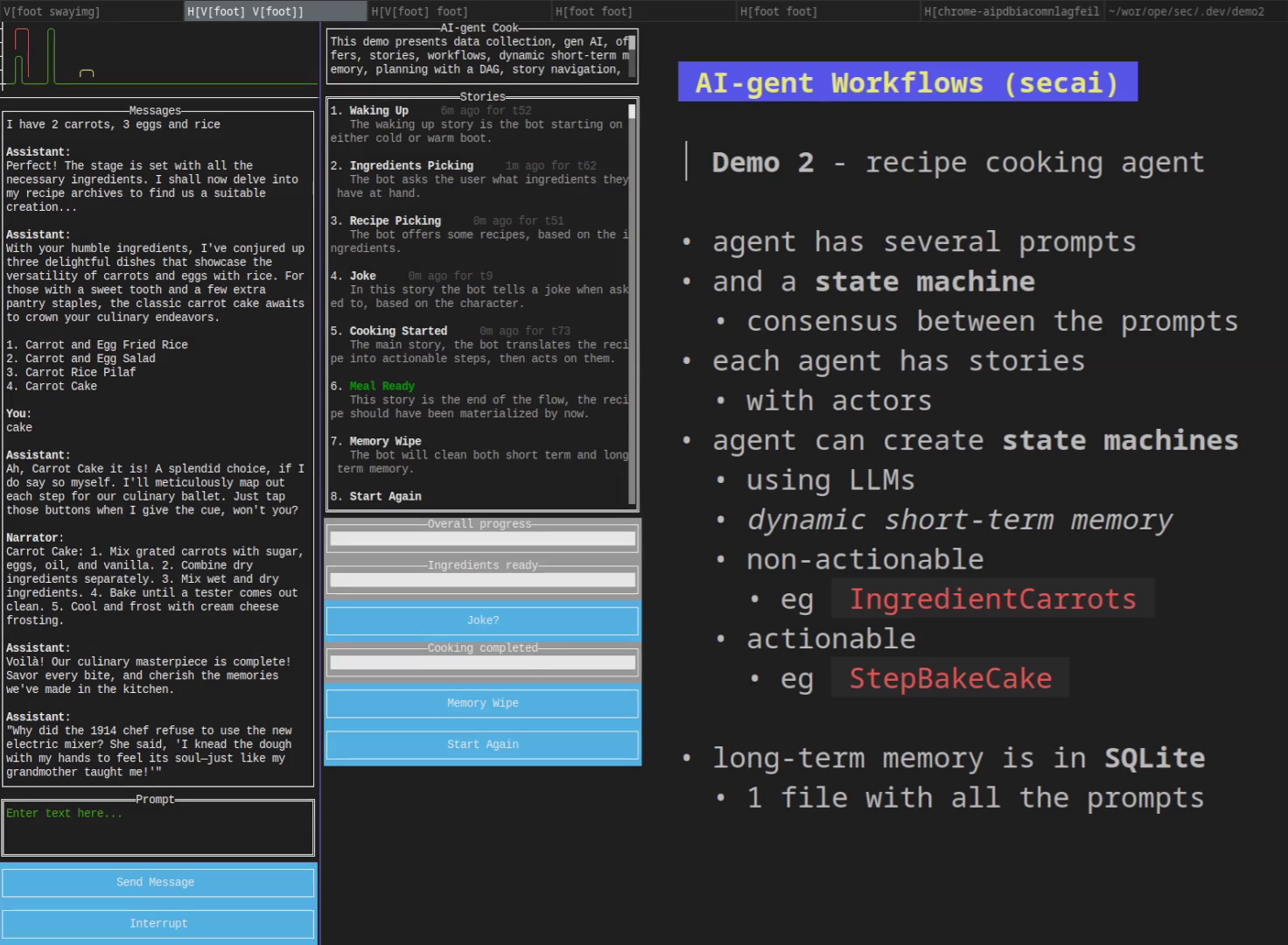

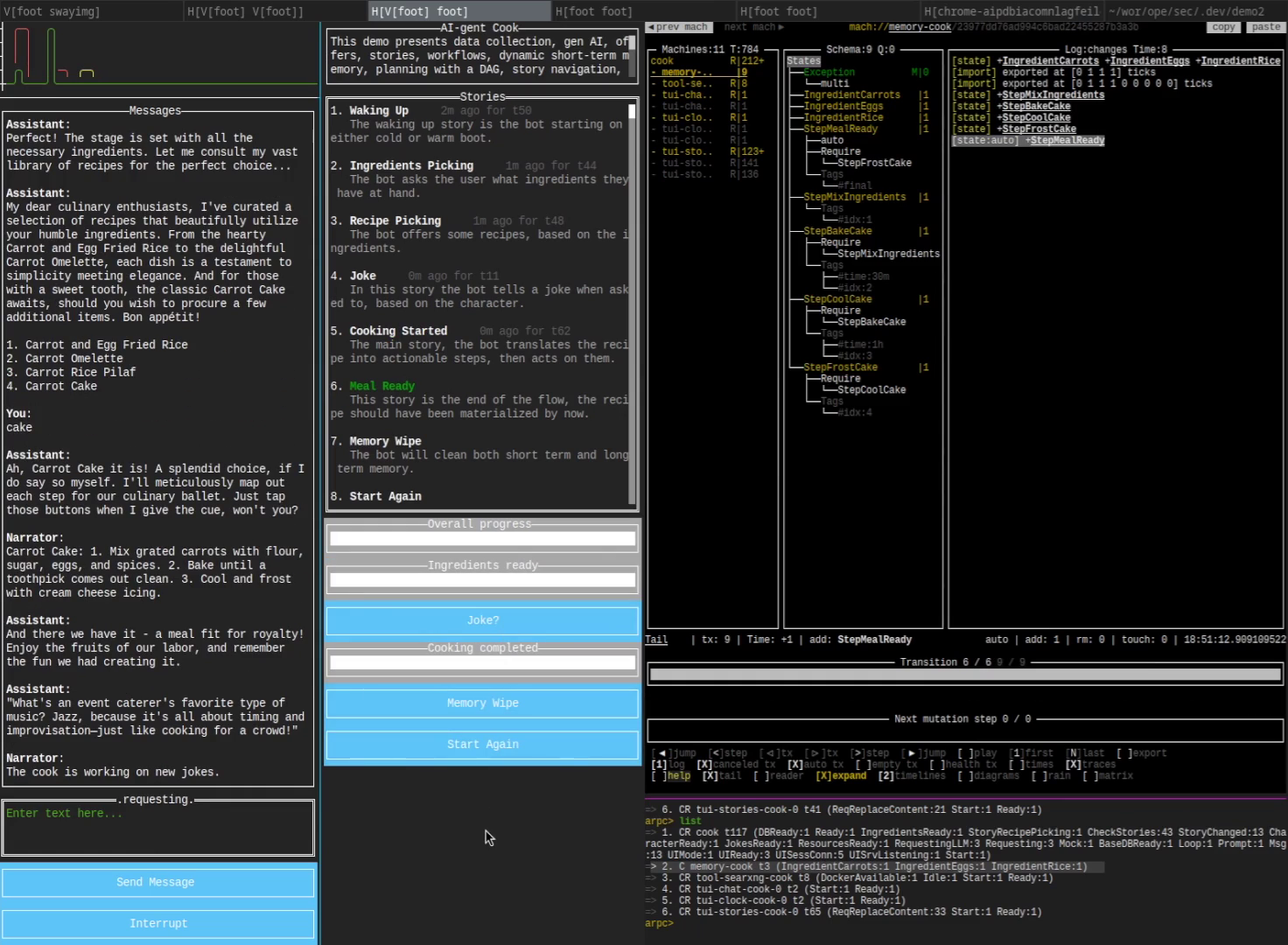

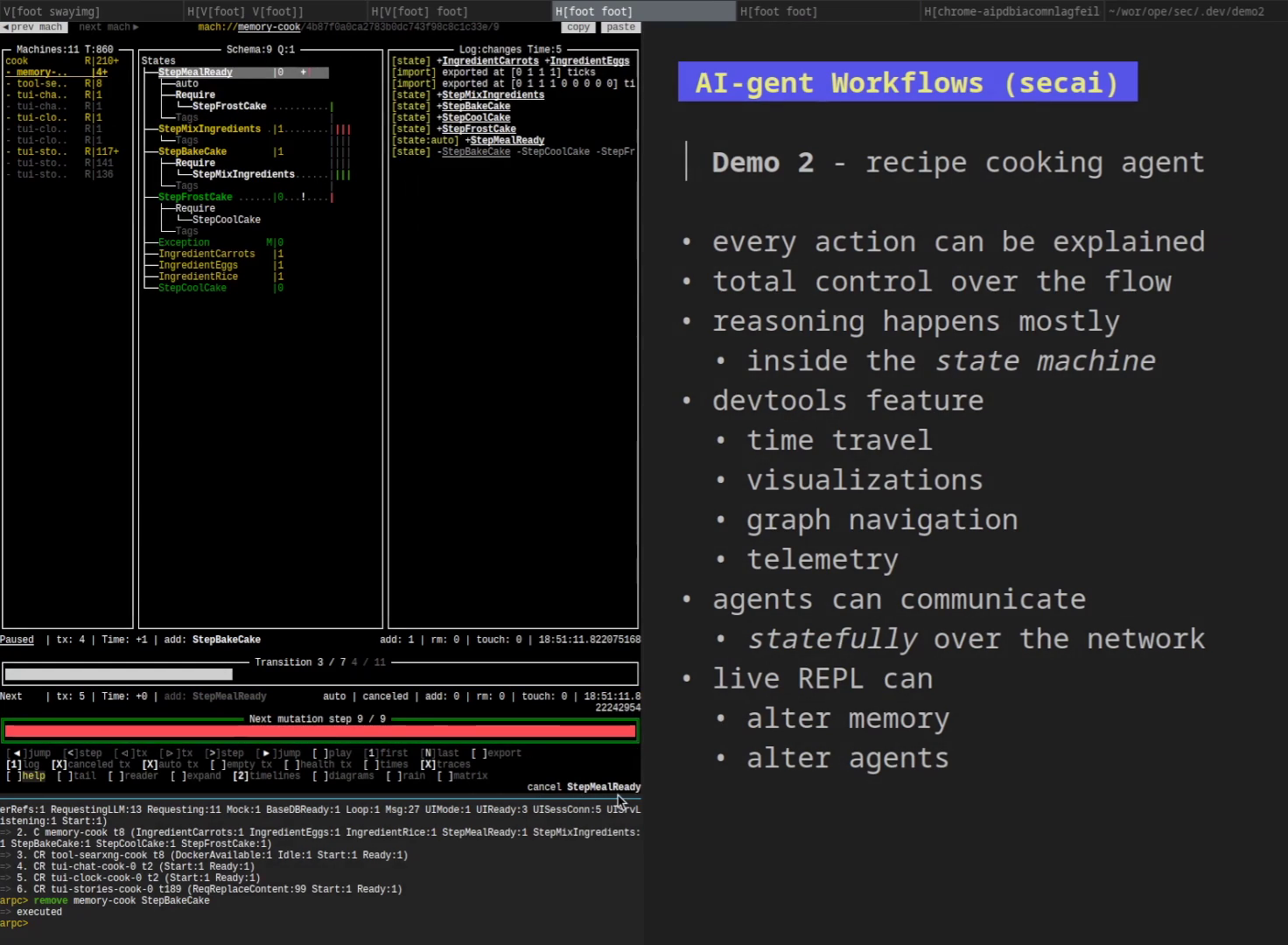

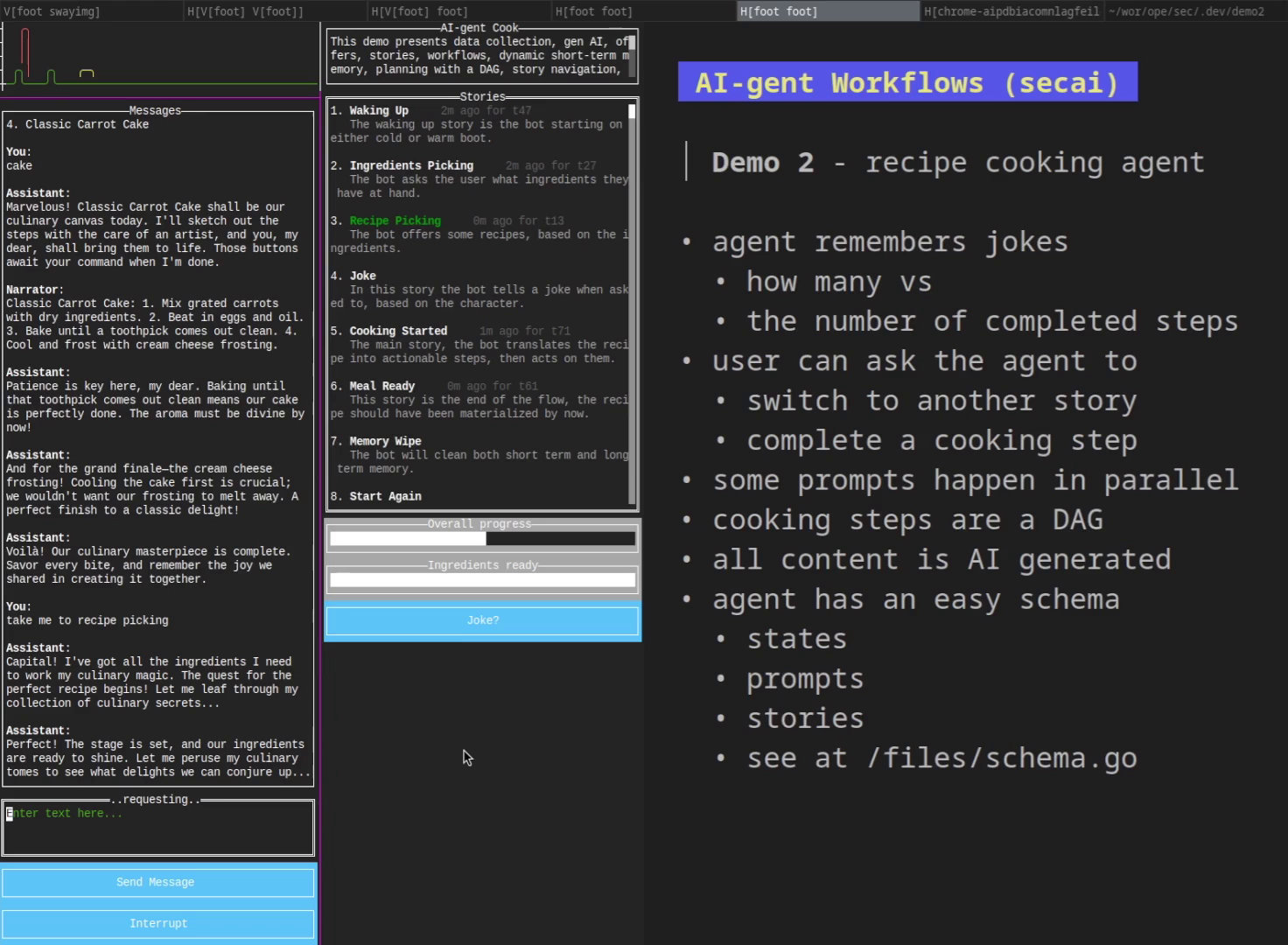

cook

Package cook is a recipe-choosing and cooking agent with a gen-ai character.

|

Package cook is a recipe-choosing and cooking agent with a gen-ai character. |

|

cook/cmd

command

|

|

|

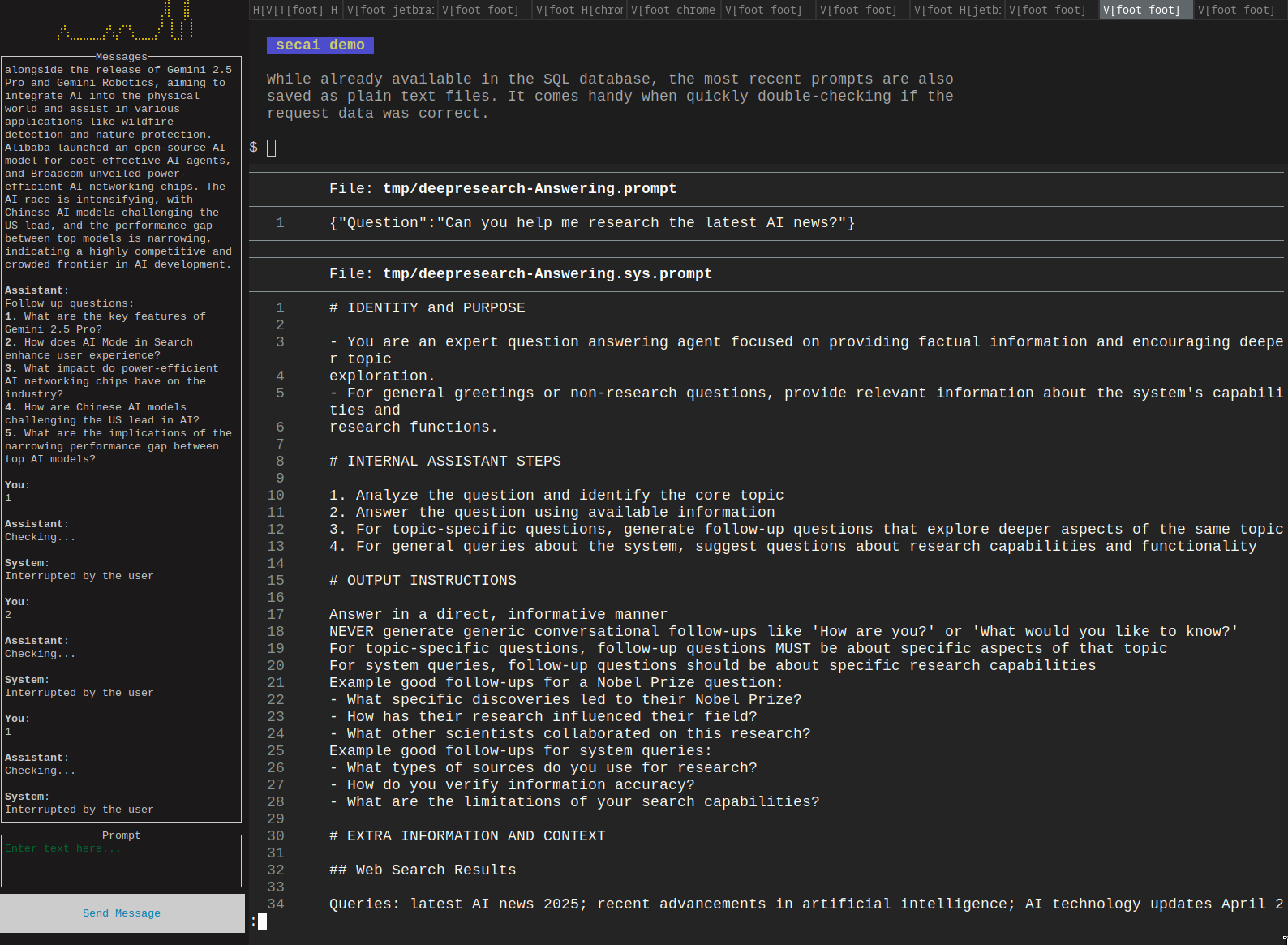

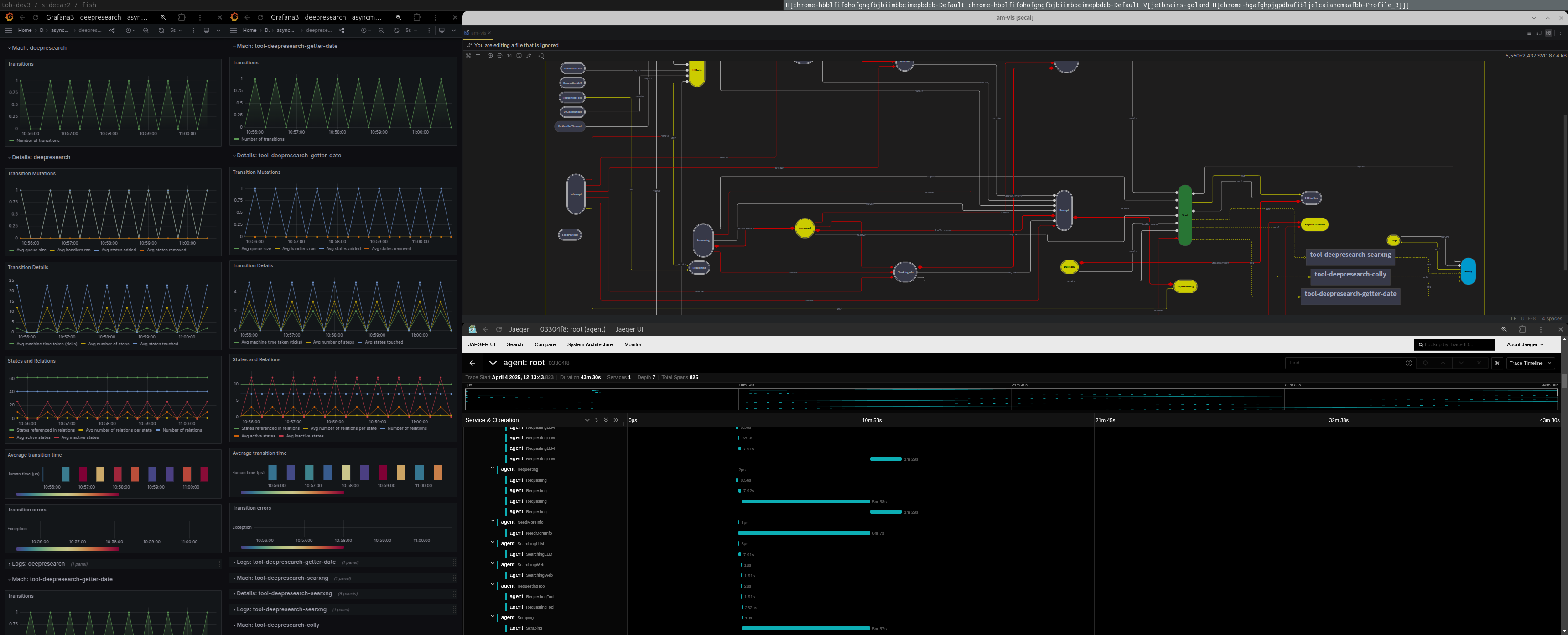

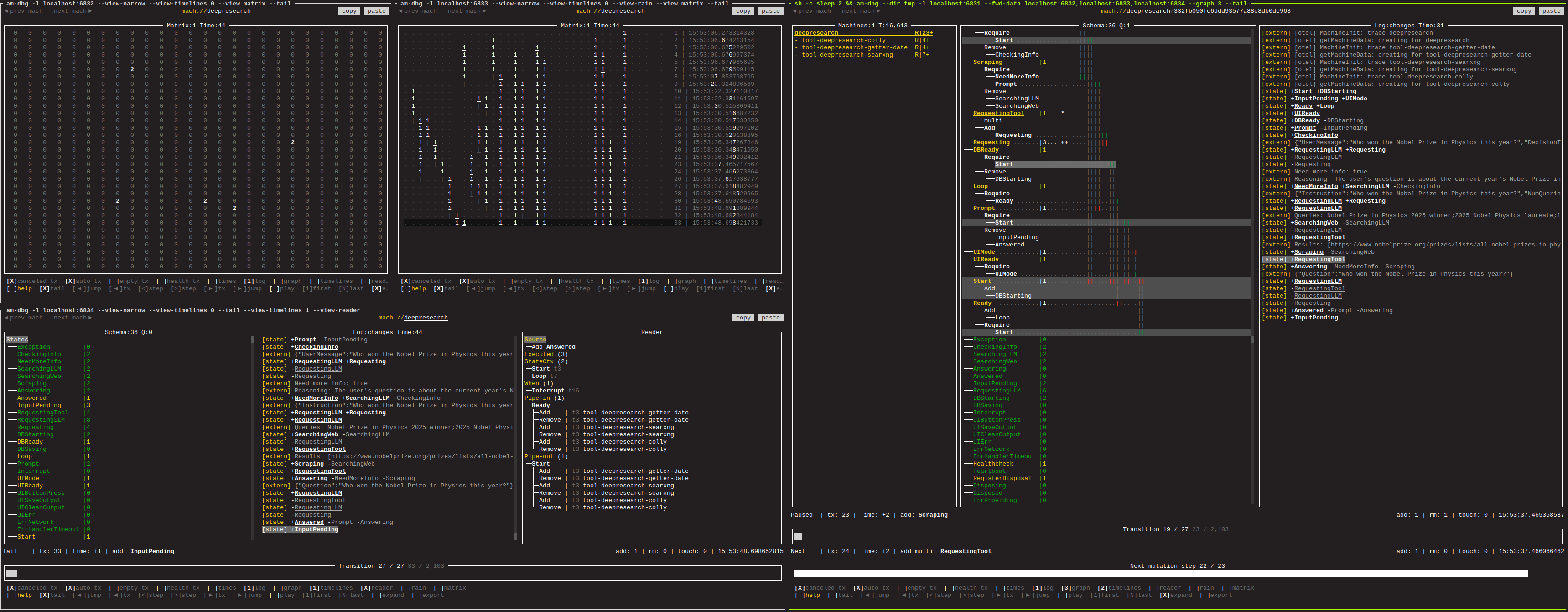

research

Package deepresearch is a port of atomic-agents/deepresearch to secai.

|

Package deepresearch is a port of atomic-agents/deepresearch to secai. |

|

research/cmd

command

|

|

|

Package llmagent is a base agent extended with common LLM prompts.

|

Package llmagent is a base agent extended with common LLM prompts. |

|

Package schema contains a stateful schema-v2 for Agent.

|

Package schema contains a stateful schema-v2 for Agent. |

|

tools

|

|
